Outcomes Don’t Stick Without Glue (Work)

Why AI for efficiency is only half the solution.

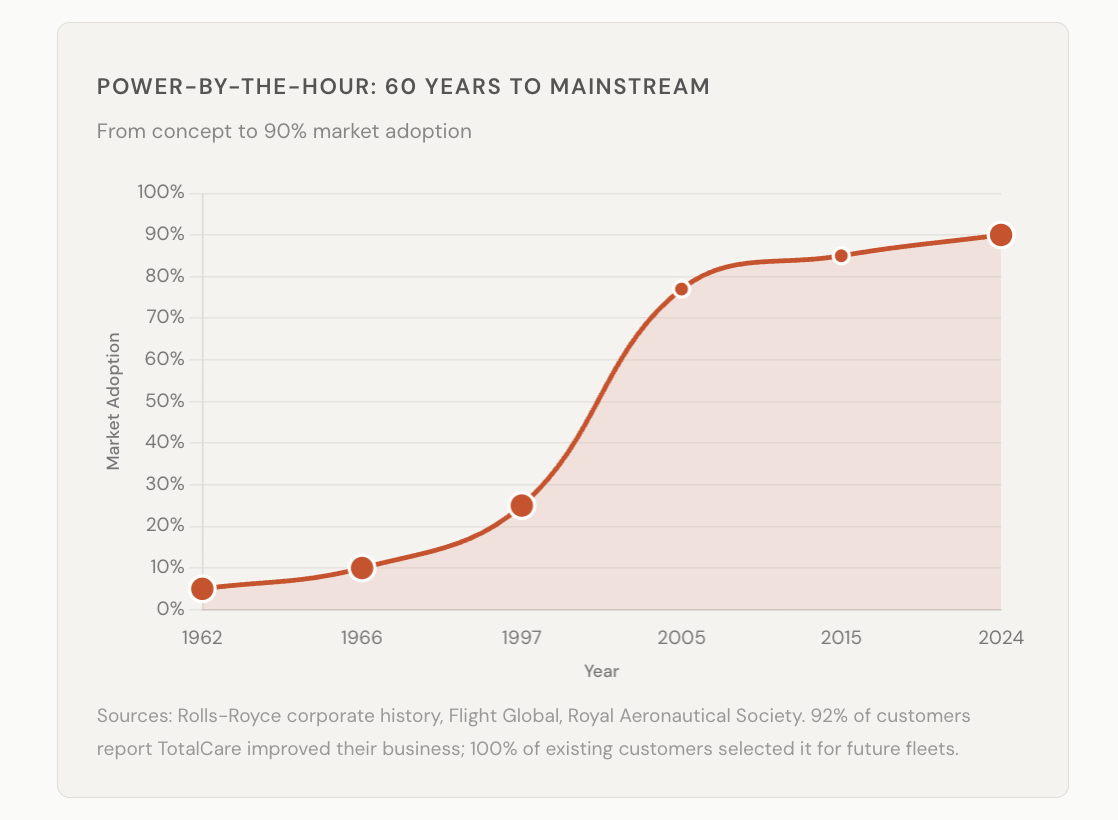

In 1962, Rolls-Royce figured out something that most businesses are only exploring now.

Their Viper engines powered business jets, but reliability was poor and operators were frustrated. The standard fix would have been better maintenance contracts, which meant bigger invoices when things broke.

Instead, they started charging a fixed rate per flying hour. If the engine didn’t fly, they didn’t get paid. They called it Power by the Hour.

One change to the pricing model and suddenly the manufacturer and the operator wanted exactly the same thing: keep the engine in the air.

Today their TotalCare programme manages thousands of engines on contracts lasting up to 27 years. They’ve extended service intervals by 25% and recover 95% of used engine materials. Civil aerospace operating margins hit 16.6% in 2024, up from 2.5% two years earlier. The share price roughly doubled in each of the last three years.

They didn’t just change how they charged. They changed what they sold. Not engines. Flight hours.

Most businesses are still selling engines.

The effort gap

There’s a reason it took sixty years for anyone to properly follow Rolls-Royce’s lead. An economic theory called Baumol’s Cost Disease, described in the same decade, explains why: services can’t improve productivity the way manufacturing can. A string quartet still needs four musicians.

For sixty years, this held. Service costs kept rising while manufacturing costs fell. SaaS companies charged per seat because the cost of delivering the service scaled with headcount.

AI is changing this, but not in the way most people frame it. The conversation around AI tends to be about productivity — do more, faster, with fewer people. That’s an output story. The more interesting shift is what happens to the gap between effort and outcome.

When it costs $15 of human effort to resolve a customer support ticket, you price on inputs — seats, hours, headcount — because the effort is the cost. When AI compresses that effort to near zero, the effort stops being the thing worth pricing. The outcome is.

That compression is what makes outcome-based pricing possible in industries where it wasn’t before. This isn’t a theoretical point. It’s already happening.

$0.99 per resolution

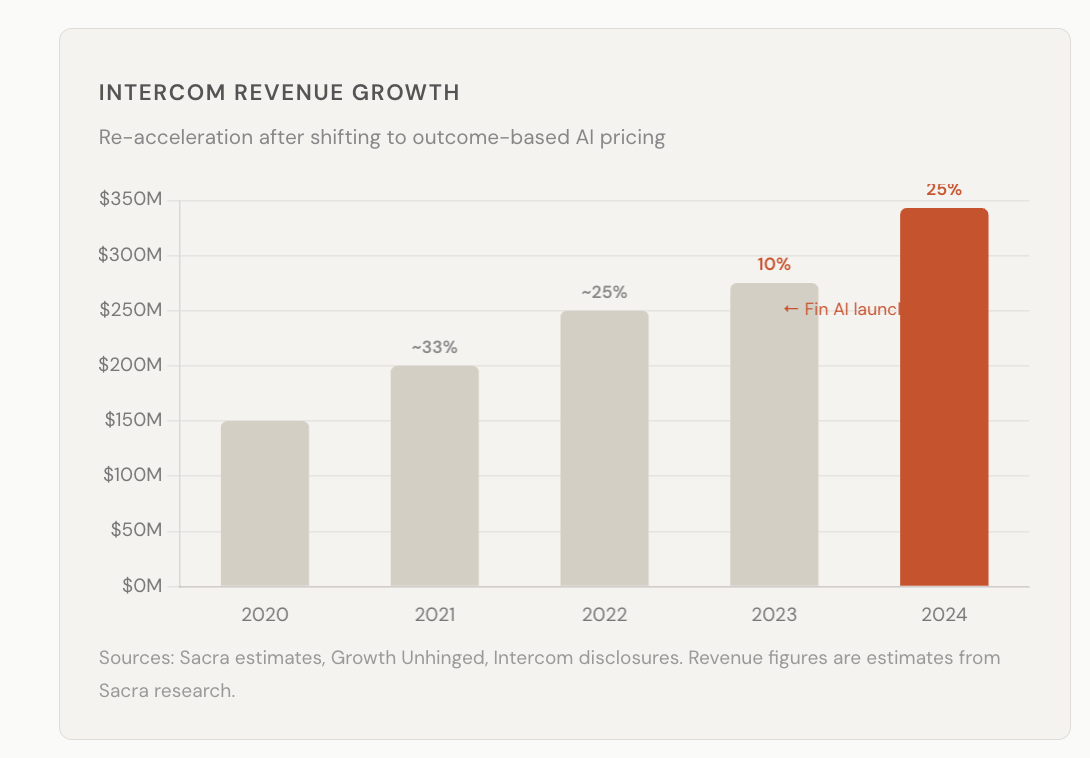

When Intercom launched their AI agent Fin in early 2023, they abandoned their own per-seat pricing and started charging $0.99 per resolved customer conversation.

The logic was simple. If AI could resolve a support ticket without a human, why charge for humans? And if you’re confident your AI actually resolves things, why not tie your revenue directly to proof of that?

Intercom’s revenue re-accelerated to 25% growth in 2024, up from 10% the year before. Fin now resolves over a million tickets a week — equivalent to the output of 6,500 human agents. The average customer sees a 56% autonomous resolution rate, more than double where it started.

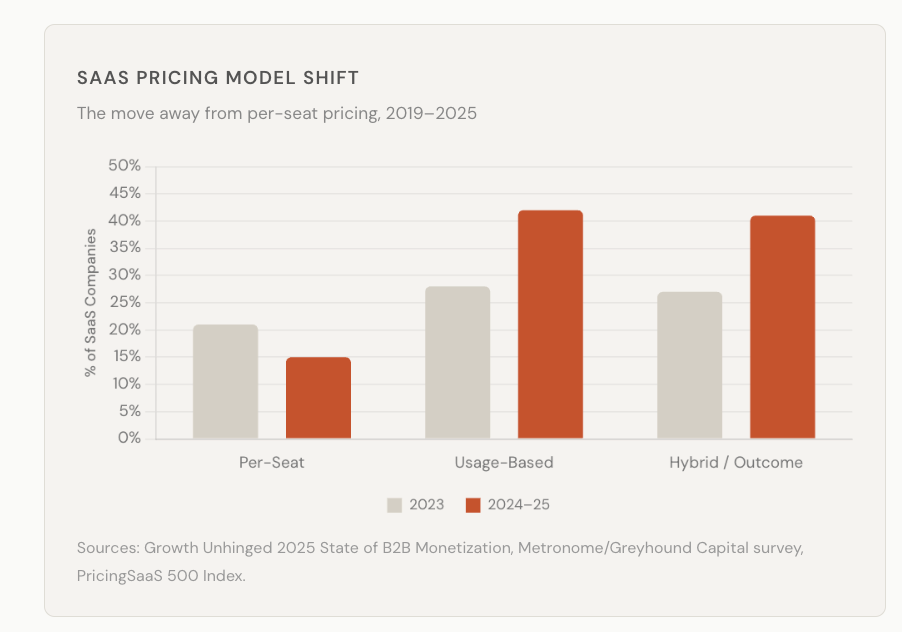

The broader industry noticed. Seat-based pricing dropped from 21% to 15% of SaaS companies in twelve months. Hybrid and outcome-based pricing surged from 27% to 41%. Credit-based models more than doubled, with Figma, HubSpot, and Salesforce all adopting them in 2025.

But here’s the part that matters for the argument: Intercom didn’t just get more productive. They compressed the effort-to-outcome gap so far that they could price on the outcome itself. That’s the thing Baumol said couldn’t happen in services. AI didn’t just make support cheaper. It made support priceable on results.
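The arithmetic behind that shift can be sketched in a few lines. This is a rough illustration rather than Intercom's actual model: it reuses the $15 human cost per ticket, the $0.99 per-resolution price, and the 56% autonomous resolution rate quoted above, and it assumes (my assumption, not the source's) that every ticket the AI fails to resolve still costs the full human rate and incurs no AI charge. The function name is hypothetical.

```python
def blended_cost_per_ticket(human_cost, ai_price, ai_resolution_rate):
    """Average cost per ticket when AI resolves a share and humans handle the rest."""
    if not 0.0 <= ai_resolution_rate <= 1.0:
        raise ValueError("ai_resolution_rate must be between 0 and 1")
    ai_part = ai_resolution_rate * ai_price              # paid only on AI resolutions
    human_part = (1.0 - ai_resolution_rate) * human_cost  # assumption: humans take the rest
    return ai_part + human_part


HUMAN_COST = 15.00  # illustrative human cost per ticket, from the text
AI_PRICE = 0.99     # per-resolution price quoted for Fin
AI_RATE = 0.56      # average autonomous resolution rate quoted above

blended = blended_cost_per_ticket(HUMAN_COST, AI_PRICE, AI_RATE)
saving = 1.0 - blended / HUMAN_COST

print(f"Blended cost per ticket: ${blended:.2f}")
print(f"Saving versus all-human handling: {saving:.0%}")
```

Under those assumptions the blended cost comes out at about $7.15 per ticket, roughly 52% below the all-human figure. Real deployments will differ, because the tickets AI cannot resolve are likely the harder and more expensive ones.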

Where the risk is shifting

Intercom wasn’t the only one watching. Zendesk followed in late 2024 with its own per-resolution model — $1.50 per AI-resolved ticket. The framing was explicit: outcome-based pricing, where the vendor only gets paid when the customer’s problem actually gets solved.

What’s interesting is where this logic is spreading beyond customer support.

Riskified, an ecommerce fraud prevention company, has been running a full outcome-based model for years. They analyse transactions in real time and provide instant approve/decline decisions. If they approve a transaction that later turns out to be fraudulent, they cover the chargeback — 100% liability. They only charge for orders they approve and guarantee. The incentive structure is total: if their AI is wrong, they pay.
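A minimal sketch shows why that guarantee aligns incentives so tightly. Everything numeric here is hypothetical: the text does not state how the fee is calculated, so the fee as a percentage of approved order value, the fraud rates, and the function name are all assumptions for illustration only.

```python
def guarantee_margin(approved_value, fee_rate, fraud_rate):
    """Vendor margin on approved orders under a 100% chargeback guarantee.

    approved_value: total value of orders the vendor approved
    fee_rate:       fee as a fraction of approved value (hypothetical structure)
    fraud_rate:     fraction of approved value that later charges back
    """
    fee_income = approved_value * fee_rate
    chargebacks_covered = approved_value * fraud_rate  # vendor absorbs all of it
    return fee_income - chargebacks_covered


# Hypothetical inputs, not real vendor figures.
APPROVED = 1_000_000
FEE = 0.007

for fraud in (0.002, 0.007, 0.010):
    margin = guarantee_margin(APPROVED, FEE, fraud)
    print(f"fraud rate {fraud:.1%}: vendor margin ${margin:,.0f}")
```

The vendor's margin turns negative as soon as the fraud it lets through exceeds its fee. The buyer does not need to inspect the model, because the vendor's own profit and loss does the auditing.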

This is shared-risk pricing. And according to PwC, 43% of enterprise buyers now consider a vendor’s willingness to share risk a significant factor in purchase decisions. That number was negligible five years ago.

The pattern is showing up in sectors you might not expect. In legal, 40% of law firm respondents in a Thomson Reuters survey said they believe AI will accelerate the shift to alternative fee arrangements — away from billable hours, toward fixed or outcome-based pricing. The reality hasn’t caught up yet (only 9% report actually seeing that shift), but the expectation is loud. When a partner at an AmLaw 100 firm tells Harvard researchers that there’s a “structural incompatibility” between AI-driven productivity and hourly billing, something is moving.

Healthcare is further along. Value-based care — where providers are paid for patient outcomes rather than procedures performed — grew 25% in participation between 2023 and 2024. The market is projected to grow from $12 billion in 2023 to over $43 billion by 2031. AI is accelerating this because it makes outcomes measurable at scale: remote monitoring, risk stratification, predictive diagnostics. When you can track whether a patient’s condition actually improved, you can price on whether it did.

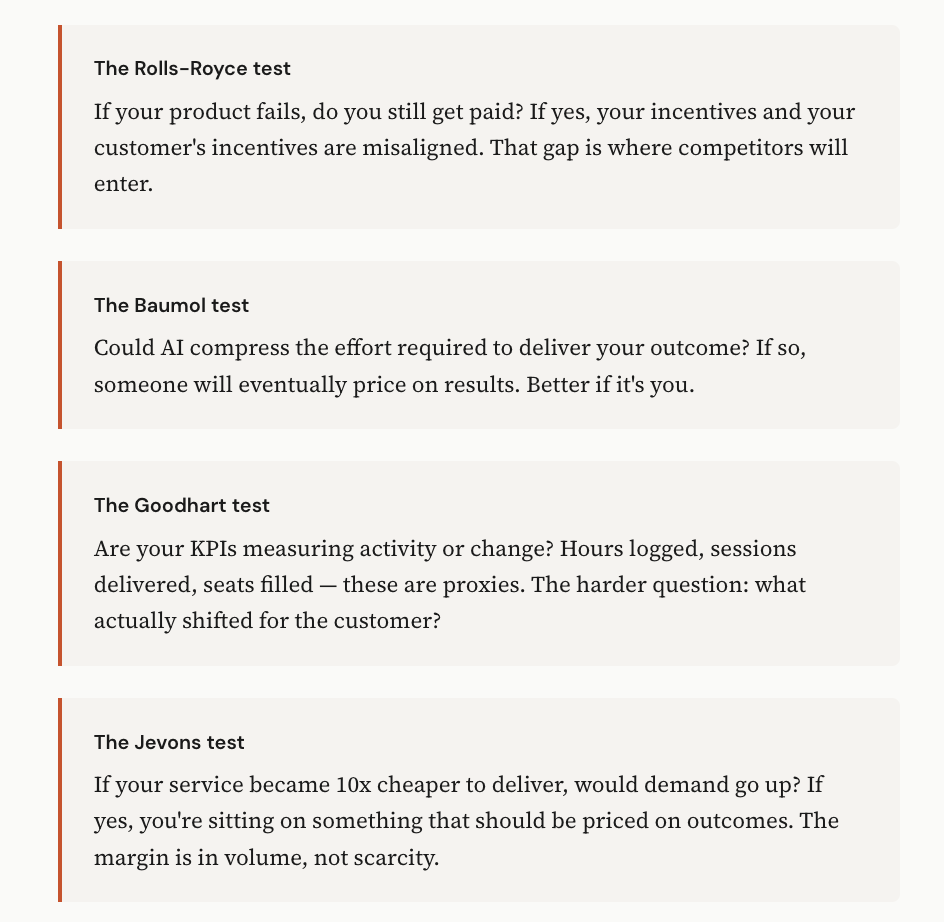

The through-line across all of these is the same. AI compresses the effort required to deliver a measurable outcome. That compression makes outcome-based pricing economically viable. And once one competitor in a category prices on outcomes, everyone else has to explain why they’re still charging for inputs.

Buy light, not lightbulbs

This isn’t only a software story.

Around 2012, the architect Thomas Rau went to Philips with an unusual request. He didn’t want to buy lightbulbs for his Amsterdam office. He wanted to buy light.

“I want to buy light, and nothing else. If you think you need a lamp, or electricity, or whatever — that’s fine. But I want nothing to do with it.”

Philips built it. They called it Pay per Lux. The customer pays for the light consumed. Philips retains ownership of all the hardware. They design it, install it, maintain it, and take everything back when the contract ends.

Schiphol Airport adopted it early. Energy consumption dropped 55%. And because Philips retained ownership of the materials, they had every incentive to design for durability rather than obsolescence.

Same incentive flip as Rolls-Royce. When you sell lightbulbs, you profit when they burn out. When you sell light, you profit when they don’t.

Signify (the company Philips Lighting became) now generates over €6 billion in annual revenue. The lighting-as-a-service model hasn’t replaced product sales entirely, but it’s driven the strategic shift toward connected systems and recurring revenue — the parts of the business where margins are strongest.

Paying for presence

Every outsourced function has a structural problem: the buyer can’t fully observe the seller’s effort. You pay for hours because hours are visible. You pay for seats because seats are countable. The actual outcome is harder to measure, so you settle for proxies.

The trouble with proxies is Goodhart’s Law: when a measure becomes a target, it stops being a good measure. SaaS companies optimised for monthly active users, not whether anyone got value. The proxy became the product.

Outcome-based pricing solves this structurally. When Rolls-Royce only gets paid for flying hours, you don’t need to audit their maintenance. When Riskified takes 100% liability for fraud, you don’t need to evaluate their algorithms. The incentive does the work that measurement never could.

What happens to the people

This is all economics. But people work inside economics.

The billable hour is dying. Not because clients complained — they’ve complained for decades. It’s dying because AI made the absurdity visible. When a contract review that took 40 hours takes 40 minutes, billing by the hour stops making sense. A December 2025 Wall Street Journal analysis put it bluntly: the billable hour “incentivises inefficiency.” When machines handle the grind, human expertise must command premiums through results, not time.

The same compression is happening everywhere professionals sell effort. PR specialists. Management consultants. Accountants. Designers. Developers. The Clio Legal Trends Report found 74% of hourly legal work — information-gathering, analysis, document review — is automatable. That’s not a warning about job losses. It’s a statement about where human value actually lives.

And it’s changing how people work.

LinkedIn profiles mentioning “fractional” roles went from 2,000 in 2022 to 110,000 in 2024. The number of fractional executives doubled from 60,000 to 120,000 in two years. These aren’t people between jobs. 73% have 15+ years of experience. They’re senior professionals who’ve worked out something important: if you’re good enough to deliver outcomes, you don’t need to sell hours.

The fractional model is outcome-based pricing for people. A fractional CMO doesn’t get paid to sit in meetings — they get paid to build the system that creates growth. A fractional CFO doesn’t bill for spreadsheet time — they get paid to extend runway or close a round. The engagement is scoped around results, not presence.

By 2027, Gartner forecasts that over 30% of mid-size enterprises will have at least one fractional executive. Korn Ferry found 37% of mid-sized firms plan to employ fractional or interim executives by mid-2026 — up from 12% in 2020. This isn’t a gig economy story about driving Ubers. It’s senior talent reorganising around outcomes.

What gets compressed isn’t expertise. It’s the overhead of selling expertise: the full-time seat, the benefits package, the annual salary negotiation, the pretence that a CMO needs to be in one building for 2,000 hours a year. AI handles the routine. Fractional handles the flexibility. What remains is the judgment that actually matters.

The World Economic Forum projects 170 million new jobs by 2030, with 92 million displaced — a net gain of 78 million positions. McKinsey found that 70% of skills employers want today are used in both automatable and non-automatable work. The overlap is the point. The skills don’t disappear. They get applied differently, to harder problems, measured on results.

This is the human corollary to Rolls-Royce’s insight: when you can measure outcomes precisely, you stop paying for proxies. Including the proxy of showing up.

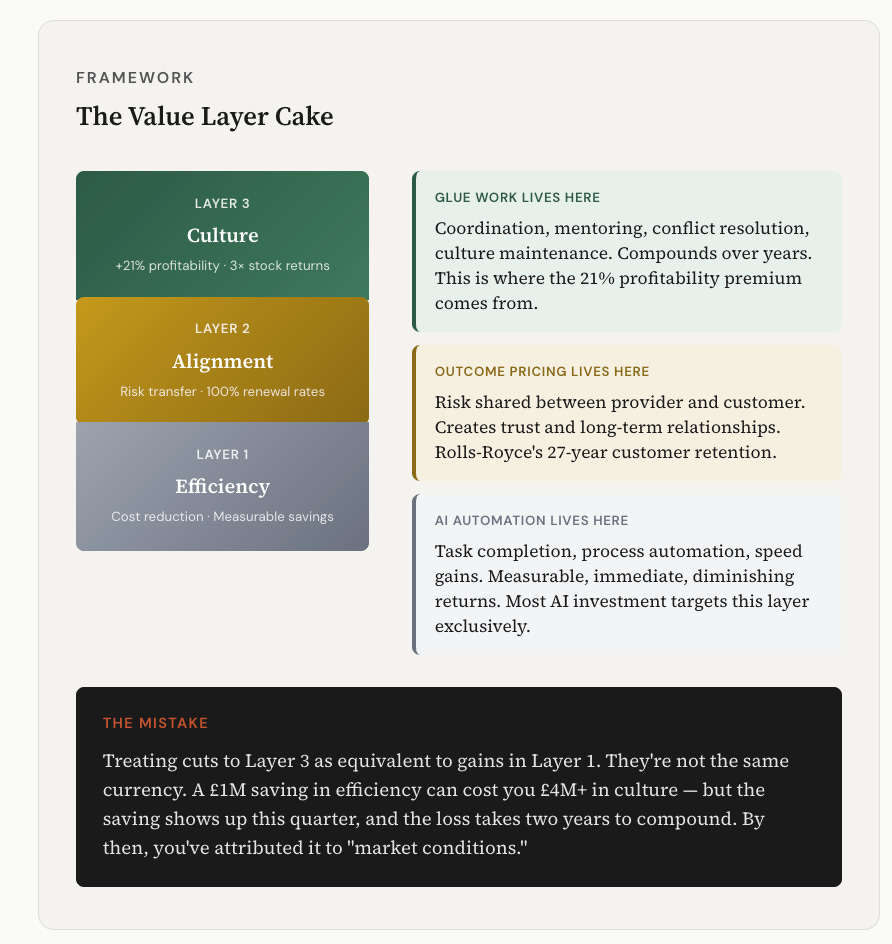

But there’s a tension here worth naming. Not everything that matters can be measured. Tanya Reilly coined the term “glue work” for the less glamorous labour that makes teams function: the coordination, the conflict resolution, the onboarding, the culture maintenance. It’s valuable but rarely valued. And it falls disproportionately on women and people of colour — precisely because it’s invisible to metrics.

Outcome-based models risk amplifying this problem. When the fractional CMO gets paid for pipeline growth and the fractional CFO gets paid for runway extension, who gets paid to hold the organisation together between outcomes? The research suggests that often no one is, at least not explicitly. Gartner predicts that by 2026, 20% of organisations will use AI to eliminate more than half their middle management roles. But middle managers do more than coordinate: they mentor, they mediate, they maintain culture. Those functions don’t disappear when the role does.

The honest answer is that outcome-based pricing works best for work that produces measurable results. For everything else — the glue, the culture, the human infrastructure — we haven’t yet figured out how to price it. Perhaps we won’t. Perhaps some work will always need to be paid for on presence, because presence is the point.

What’s changing isn’t that all work becomes outcome-based. It’s that the work which can be measured on outcomes increasingly will be. The rest will need to be valued differently — recognised, compensated, protected from the efficiency logic that treats anything unmeasurable as dispensable.

The measurement paradox

Here’s what makes this uncomfortable: the data on culture’s value is unambiguous. Gallup finds companies with strong cultures see 21% higher profitability and 17% higher productivity. Great Place to Work research shows they triple stock market performance. Strong culture companies see up to 85% revenue increases. Disengaged employees cost 34% of their salary in lost productivity — globally, that’s $438 billion annually.

So the question becomes: if you shift to outcome-based pricing and cut the glue work that maintains culture, are you optimising for one metric while destroying another? The HBR research on layoffs is instructive. Culture Amp analysed 146 companies that went through workforce reductions between 2020 and 2022. Company confidence dropped 16.9 percentage points. Belief in career opportunities dropped 12.1 points. Confidence in leadership dropped 10.5 points. Recovery took 12–18 months — and that was only if roles were backfilled. For 2023 layoffs, recovery is tracking at 18–24 months.

The companies with the highest pre-layoff engagement saw the biggest drops. The very thing you built becomes the thing you lose the most of.

What’s missing is a framework that compares these numbers. When a company eliminates middle management to cut costs, what’s the actual P&L impact when you account for the 21% profitability differential from culture degradation? When fractional executives replace full-time leadership, what’s the net effect on the 17% productivity premium? No one is doing this maths publicly. The efficiency gains are measured in spreadsheets. The culture losses show up in turnover, disengagement, and institutional knowledge walking out the door — metrics that take years to compound and are easy to attribute to something else.
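Even a crude version of that comparison is easy to write down. The sketch below is a toy, not an established framework: it sets payroll saved from cutting roles against productivity lost to disengagement, using the 34%-of-salary figure quoted above. The salaries, headcounts, and function names are all hypothetical, and it ignores turnover, lost knowledge, and time lags.

```python
DISENGAGEMENT_COST = 0.34  # share of salary lost per disengaged employee (figure quoted above)


def net_annual_impact(roles_cut, manager_salary, newly_disengaged, staff_salary):
    """Payroll saved minus productivity lost to disengagement, per year."""
    savings = roles_cut * manager_salary
    culture_cost = newly_disengaged * staff_salary * DISENGAGEMENT_COST
    return savings - culture_cost


def breakeven_disengaged(roles_cut, manager_salary, staff_salary):
    """Number of newly disengaged staff at which the savings are wiped out."""
    return roles_cut * manager_salary / (staff_salary * DISENGAGEMENT_COST)


# Hypothetical company, chosen only to make the arithmetic visible.
ROLES_CUT = 10
MANAGER_SALARY = 100_000
STAFF_SALARY = 60_000

for disengaged in (10, 30, 60):
    net = net_annual_impact(ROLES_CUT, MANAGER_SALARY, disengaged, STAFF_SALARY)
    print(f"{disengaged} newly disengaged staff: net impact ${net:,.0f}")

breakeven = breakeven_disengaged(ROLES_CUT, MANAGER_SALARY, STAFF_SALARY)
print(f"Savings are wiped out at about {breakeven:.0f} disengaged staff")
```

With these made-up inputs, the saving from ten management roles disappears once roughly 49 people disengage. The point is not the specific number but that the two sides of the ledger can be put in the same units.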

Organisational Network Analysis is starting to fill this gap. Rob Cross’s research found that 3–5% of people in a typical network account for 20–35% of value-adding collaborations. But here’s the kicker: when Cross compared his list of these hidden connectors against companies’ lists of “top talent,” there was less than 50% overlap. Half the people holding organisations together aren’t being identified, recognised, or protected.
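The comparison Cross describes can be approximated with very little code. The sketch below is a deliberate simplification, not his method: it counts collaboration ties per person, treats the top slice as connectors, and checks how many of them appear on a top-talent list. The names, the data, and the `connector_report` function are hypothetical.

```python
from collections import Counter


def connector_report(collaborations, top_talent, connector_share=0.05):
    """Find the most-connected people and compare them with a talent list.

    collaborations:  list of (person_a, person_b) pairs, one per
                     value-adding collaboration (hypothetical survey data)
    top_talent:      set of names on the official top-talent list
    connector_share: fraction of people to treat as connectors
    """
    counts = Counter()
    for a, b in collaborations:
        counts[a] += 1
        counts[b] += 1

    n_connectors = max(1, round(len(counts) * connector_share))
    connectors = [name for name, _ in counts.most_common(n_connectors)]
    connector_set = set(connectors)

    # A collaboration counts once if at least one side is a connector.
    involved = sum(1 for a, b in collaborations
                   if a in connector_set or b in connector_set)

    return {
        "connectors": connectors,
        "collaboration_share": involved / len(collaborations),
        "talent_overlap": len(connector_set & set(top_talent)) / len(connectors),
    }


# Made-up network of ten people.
collaborations = [
    ("ana", "ben"), ("ana", "cy"), ("ana", "dee"), ("ana", "eli"),
    ("fay", "gus"), ("fay", "hal"), ("fay", "ivy"),
    ("ben", "cy"), ("gus", "jo"), ("hal", "ivy"),
]
top_talent = {"ana", "ben", "gus"}

report = connector_report(collaborations, top_talent, connector_share=0.2)
print(report)
```

In this toy network two people are involved in 70% of collaborations, and only one of them is on the talent list. A real analysis would use richer measures than a simple tie count, but the gap it exposes has the same shape.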

Some companies are building measurement systems. The Glue Work Framework offers tools to integrate invisible work into career ladders and performance reviews. Peer-nominated recognition programs ask “who helped you succeed this quarter?” rather than measuring individual output. ONA maps information flows and trust networks to reveal who actually makes collaboration work. But these are emerging practices, not standard ones.

What AI could do (but isn’t yet)

The conversation about AI and work focuses almost entirely on task automation — what work AI can replace. There’s a different question worth asking: could AI amplify glue work rather than eliminate it?

Consider what glue work actually involves: knowing who to connect with whom, remembering what matters to people, tracking commitments across conversations, sensing when someone is struggling before they say so, translating between teams that speak different professional languages, maintaining the institutional memory that makes coordination possible. These are pattern-matching problems layered on top of emotional intelligence.

AI is already doing pieces of this — meeting summaries, action item tracking, sentiment analysis, translation. Microsoft’s Teams AI can detect rising tension in meetings and suggest de-escalation. Sentiment analysis tools can flag burnout signals before someone quits. Calendar analysis can identify people who are becoming isolated. But these are sold as “productivity tools” and “workflow automation.” No one is calling it what it is: glue work at scale.

A 2025 longitudinal study of software teams found something interesting. In 2023, participants imagined AI would become “agentic systems that could coordinate projects, mediate conflicts, or anticipate team needs.” By 2025, what they actually had were individual productivity tools — ChatGPT, Copilot, Claude. The coordination layer hadn’t materialised. AI helped people work faster in isolation. It didn’t help them work better together.

The opportunity sitting in plain sight: AI that doesn’t just automate coordination tasks, but amplifies the human capacity to maintain relationships, culture, and trust. AI that notices the new hire who hasn’t been onboarded into the informal knowledge network. AI that identifies the person doing 200 hours of non-promotable work and surfaces it to leadership. AI that tracks not just what got done, but who made it possible for others to do their work.

This isn’t science fiction. The components exist. What’s missing is the framing. We’re building AI for efficiency when we could be building AI for connection. We’re measuring AI’s impact on individual productivity when we should be measuring its impact on collective capability. We’re treating glue work as overhead to be eliminated when it’s infrastructure to be strengthened.

The companies that figure this out first will have a structural advantage — not because they cut the most costs, but because they maintained the culture that makes outcomes possible in the first place.

What this means in practice

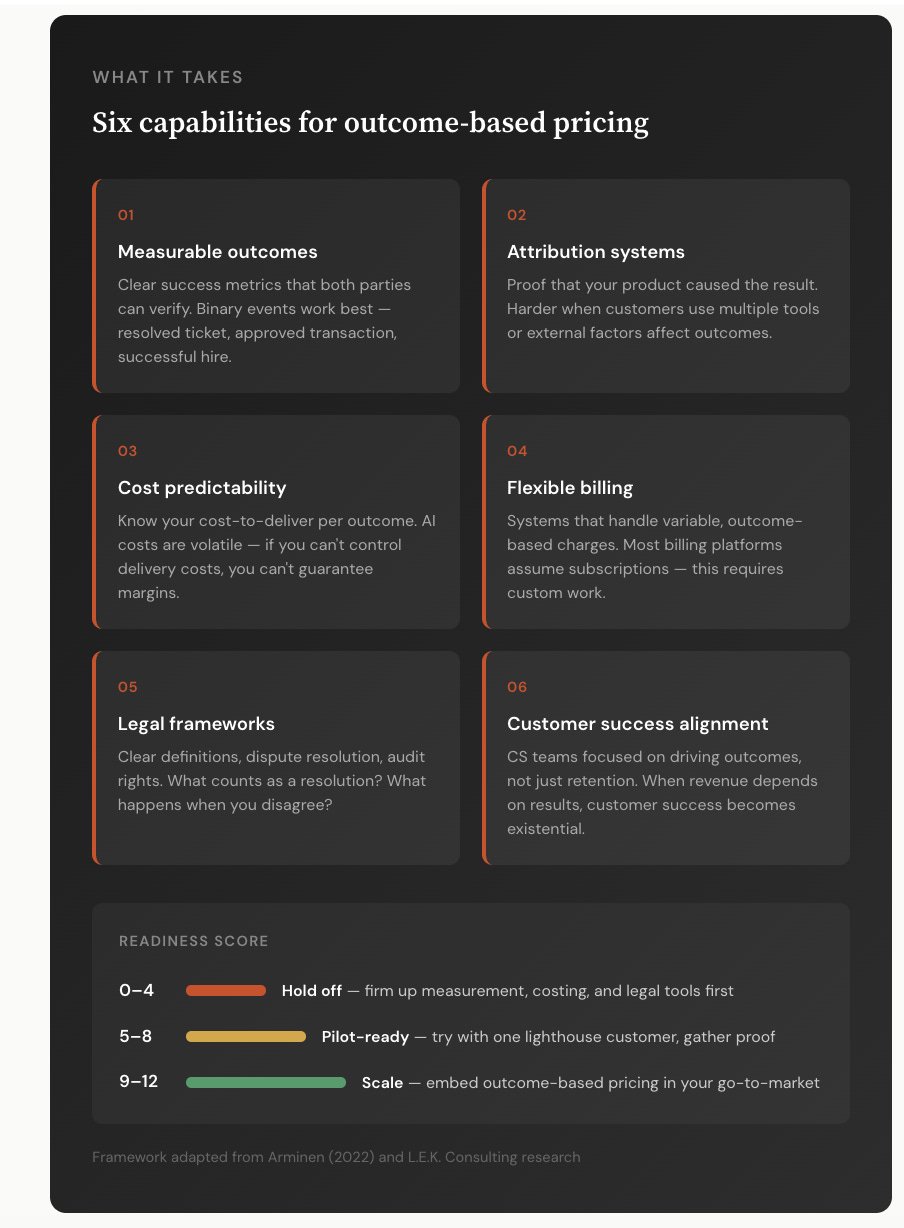

If you’re building a business, running a team, or pricing a service, there are four questions worth sitting with.

None of this means input-based pricing vanishes overnight. But the direction is set, and the companies that figured it out earliest are building 27-year customer relationships while everyone else renegotiates annual renewals.

AI will crush the padding that many businesses rely on, so we have to figure out how to use it efficiently while retaining the glue work. Otherwise we are trading short-term gains for long-term value destruction.
